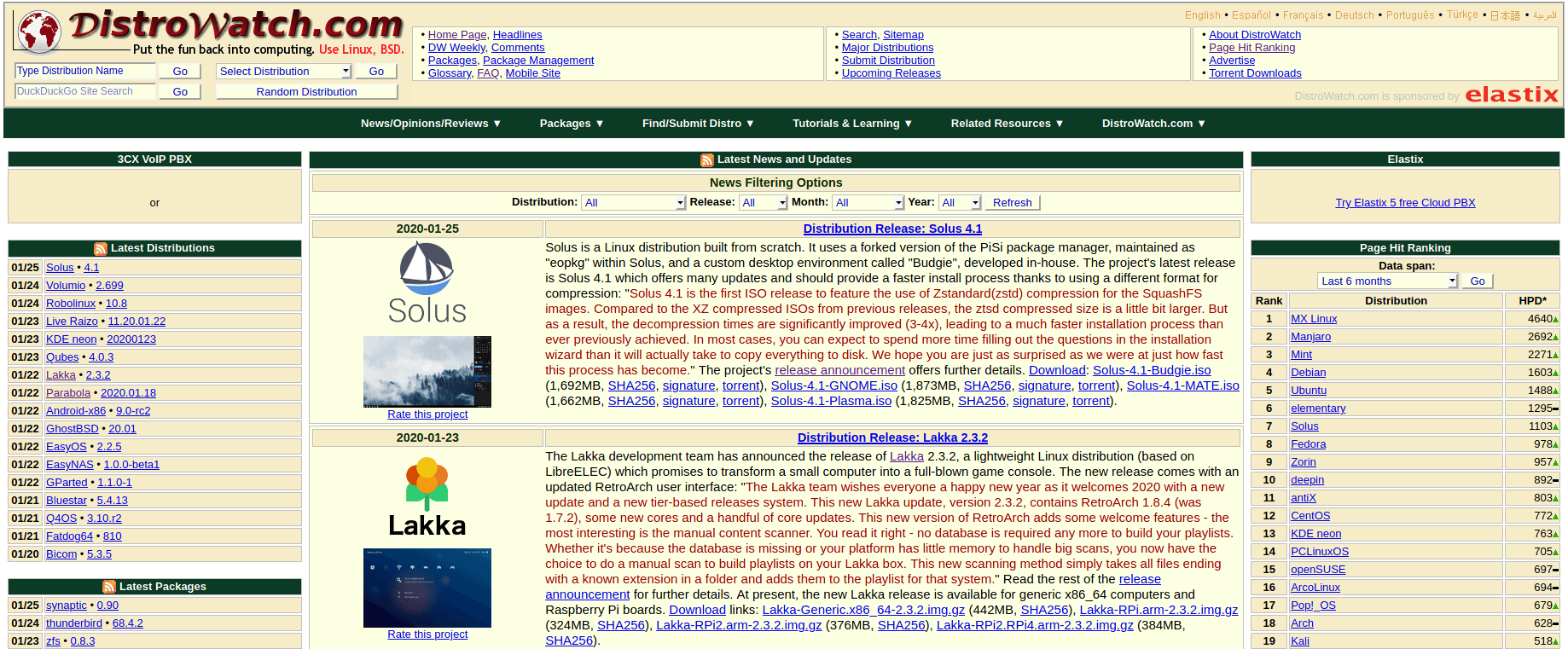

Distrowatch is one of the best-known websites about Linux distributions (and other Unix-like operating systems), and it has been in service since 2001. It tracks new releases for the huge number of distributions in its database, and it also publishes a “ranking” of those distributions.

Unfortunately, in many online discussions about Linux distributions, people cite that ranking to argue that one distribution is more popular than another. This is a mistake: Distrowatch’s ranking algorithm has no basis that links it to the number of people actually using a distribution. The website itself doesn’t claim that it is an accurate ranking of Linux distributions.

In today’s article, we’ll quickly learn why this is the case.

How Does Distrowatch Rank Distributions?

Everything about Distrowatch’s ranking algorithm is described on its “Page hit ranking” page. The algorithm simply depends on the number of hits a distribution’s page receives per day, and it counts only one hit per IP address per day (to prevent duplicates and spam).

This means that if you search for “Fedora” on Google and land on Fedora’s page on Distrowatch, the counter simply increases by 1.

The same goes if you are browsing the site yourself and click on the Fedora page, but, as noted above, you can contribute at most one hit per day. No matter how many other ways you reach the Fedora page on the same day, its counter increases only once for your IP address.
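To make the rule concrete, here is a minimal sketch of such a counter. It is not Distrowatch’s actual code: the `HitCounter` class, its method names and the sample IP addresses are all hypothetical, and it assumes the one-hit limit applies per distribution page, per IP address, per day.

```python
from collections import defaultdict
from datetime import date


class HitCounter:
    """Toy page-hit counter: at most one hit per (page, IP, day)."""

    def __init__(self):
        self.seen = set()             # (distro, ip, day) combinations already counted
        self.hits = defaultdict(int)  # (distro, day) -> number of counted hits

    def record(self, distro, ip, day):
        """Count the visit and return True, or return False for a duplicate."""
        key = (distro, ip, day)
        if key in self.seen:
            return False
        self.seen.add(key)
        self.hits[(distro, day)] += 1
        return True


counter = HitCounter()
today = date(2024, 1, 1)
counter.record("fedora", "203.0.113.5", today)   # arrived from a search engine
counter.record("fedora", "203.0.113.5", today)   # same IP, same day: ignored
counter.record("fedora", "198.51.100.7", today)  # a different visitor
print(counter.hits[("fedora", today)])           # 2
```

The second visit from the same address changes nothing, which is exactly the duplicate protection described above.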

On Distrowatch’s homepage, you can choose to rank distributions over the last 6 months, 3 months, 30 days or 7 days. All of these options are based on the hits-per-day counter described above.
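As a rough illustration of how the time span changes the result, the sketch below ranks two made-up distributions by their average hits per day over a window. The function names, the distribution names (`steady`, `spiky`) and all numbers are invented for the example, and it assumes the score for a window is simply the mean of the daily hit counts.

```python
from datetime import date, timedelta


def hits_per_day(daily_hits, end, window_days):
    """Average daily hits over the last `window_days` days ending at `end`."""
    start = end - timedelta(days=window_days - 1)
    total = sum(n for day, n in daily_hits.items() if start <= day <= end)
    return total / window_days


def rank(all_hits, end, window_days):
    """Return distribution names sorted by average hits per day, highest first."""
    scores = {name: hits_per_day(h, end, window_days) for name, h in all_hits.items()}
    return sorted(scores, key=scores.get, reverse=True)


end = date(2024, 1, 30)
days = [end - timedelta(days=i) for i in range(30)]  # days[0] is the most recent day

all_hits = {
    # Invented data: constant interest all month.
    "steady": {d: 100 for d in days},
    # Invented data: quiet month, then a burst of visits in the last 7 days.
    "spiky": {d: (300 if i < 7 else 20) for i, d in enumerate(days)},
}

print(rank(all_hits, end, 7))   # ['spiky', 'steady']
print(rank(all_hits, end, 30))  # ['steady', 'spiky']
```

With the same underlying counter, the order flips depending on the window, so a short burst of page views can put a distribution on top of the 7-day list without any change in how many people use it.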

Is This a Good Ranking?

Now, Distrowatch itself doesn’t claim that this result reflects the number of users of a distribution:

“The DistroWatch Page Hit Ranking statistics are a light-hearted way of measuring the popularity of Linux distributions and other free operating systems among the visitors of this website. They correlate neither to usage nor to quality and should not be used to measure the market share of distributions. They simply show the number of times a distribution page on DistroWatch.com was accessed each day, nothing more.”

And they are fully honest and correct. This ranking does not represent how popular a Linux distribution is, for several reasons:

- There’s no reliable connection between the size of a distribution’s user base and the hits its page gets on a third-party website.

- The number of hits goes up each time there’s a new release of a distribution, and likely stays flat otherwise. This happens because Distrowatch publishes new releases on its homepage, so if you are a daily Distrowatch reader, you would click on whatever is published today. As a result, distributions that put out more releases every few months are more likely to rank higher on Distrowatch. This favors rolling-release distributions, for example, while creating the illusion that distributions released only every 6 months or once a year are not that common.

- Again, this is only about Distrowatch readers, who are probably only a tiny fraction of all Linux users. So treating this metric as a reasonably accurate measure of Linux distributions’ popularity is far-fetched.

That’s why we urge you never to use this ranking in any discussion about Linux distributions and their popularity. And if you see anyone trying to use it to rank distributions, just throw this article in their face and call out their BS.

What’s a Better Alternative to Rank Distributions?

Right now, there is no easy way to measure how popular a Linux distribution is among users. A good start would be to ask distributions to publish their download statistics, but most Linux distributions simply won’t do it. The number of downloads is also not a great measure of popularity, as many people download the same distribution several times, and even those who download it once may install it on dozens of machines.

Another alternative could be publishing the hit statistics for the distribution’s official repositories. Almost every user needs to download a package or an update from the repositories at least once every few weeks, so if we could see how many unique IP addresses access the distribution’s repository mirrors per month, for example, we could get a good picture of how popular that distribution is.

While this alternative is good in theory, its problem is that it won’t count offline installations. People on both sides can argue with strong reasons why offline installations do or do not matter, but either way it leaves a gap. Additionally, this would count Linux Mint, Kubuntu and Ubuntu MATE users all as Ubuntu users, simply because they are using Ubuntu’s official repositories, which would distort the results.
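The repository idea and its derivative problem can be sketched in a few lines. Everything here is hypothetical: the request records, the IP addresses and the `unique_ips_per_repo` function are invented for illustration, and the last field of each record (the distribution the user really runs) is something a real mirror would not know.

```python
from collections import defaultdict

# Invented request records:
# (month, client IP, repository contacted, distribution actually installed).
# The last field is included only to show what the server cannot see.
requests = [
    ("2024-01", "203.0.113.5", "ubuntu", "Ubuntu"),
    ("2024-01", "203.0.113.5", "ubuntu", "Ubuntu"),    # same machine, second update
    ("2024-01", "198.51.100.7", "ubuntu", "Kubuntu"),
    ("2024-01", "192.0.2.44", "ubuntu", "Linux Mint"),
    ("2024-01", "192.0.2.80", "fedora", "Fedora"),
]


def unique_ips_per_repo(records):
    """Count distinct client IPs per (month, repository)."""
    seen = defaultdict(set)
    for month, ip, repo, _actual_distro in records:
        seen[(month, repo)].add(ip)
    return {key: len(ips) for key, ips in seen.items()}


counts = unique_ips_per_repo(requests)
print(counts[("2024-01", "ubuntu")])  # 3
print(counts[("2024-01", "fedora")])  # 1
```

Repeated updates from one address are counted once, which is the appeal of the method, but the Kubuntu and Linux Mint machines are indistinguishable from Ubuntu ones because only the repository they contact is recorded.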

In the end, each methodology has its own issues, but some are much better than others. Still, do not get tricked by people who try to use Distrowatch’s visitor statistics to rank all the Linux distributions out there.

Hanny is a computer science & engineering graduate with a master’s degree, and an open source software developer. He has created a lot of open source programs over the years, and maintains separate online platforms for promoting open source in his local communities.

Hanny is the founder of FOSS Post.